The Direct Message

Tension: Autonomous vehicles are marketed as driverless, but a Senate investigation reveals they depend on hidden human operators — some based overseas without U.S. driver’s licenses — to function safely on American roads.

Noise: The industry frames remote assistance as a rare safety backup, and the lack of a shared definition of ‘intervention’ makes it impossible to compare companies or evaluate whether the technology actually works as advertised.

Direct Message: The refusal to release intervention data is itself the answer: if the cars truly drove themselves, the numbers would be a selling point, not a secret.


Robotaxi companies have faced scrutiny over how often human operators intervene in their autonomous vehicles, raising questions about transparency in the industry and the hidden human labor that may be supporting systems marketed as fully autonomous.

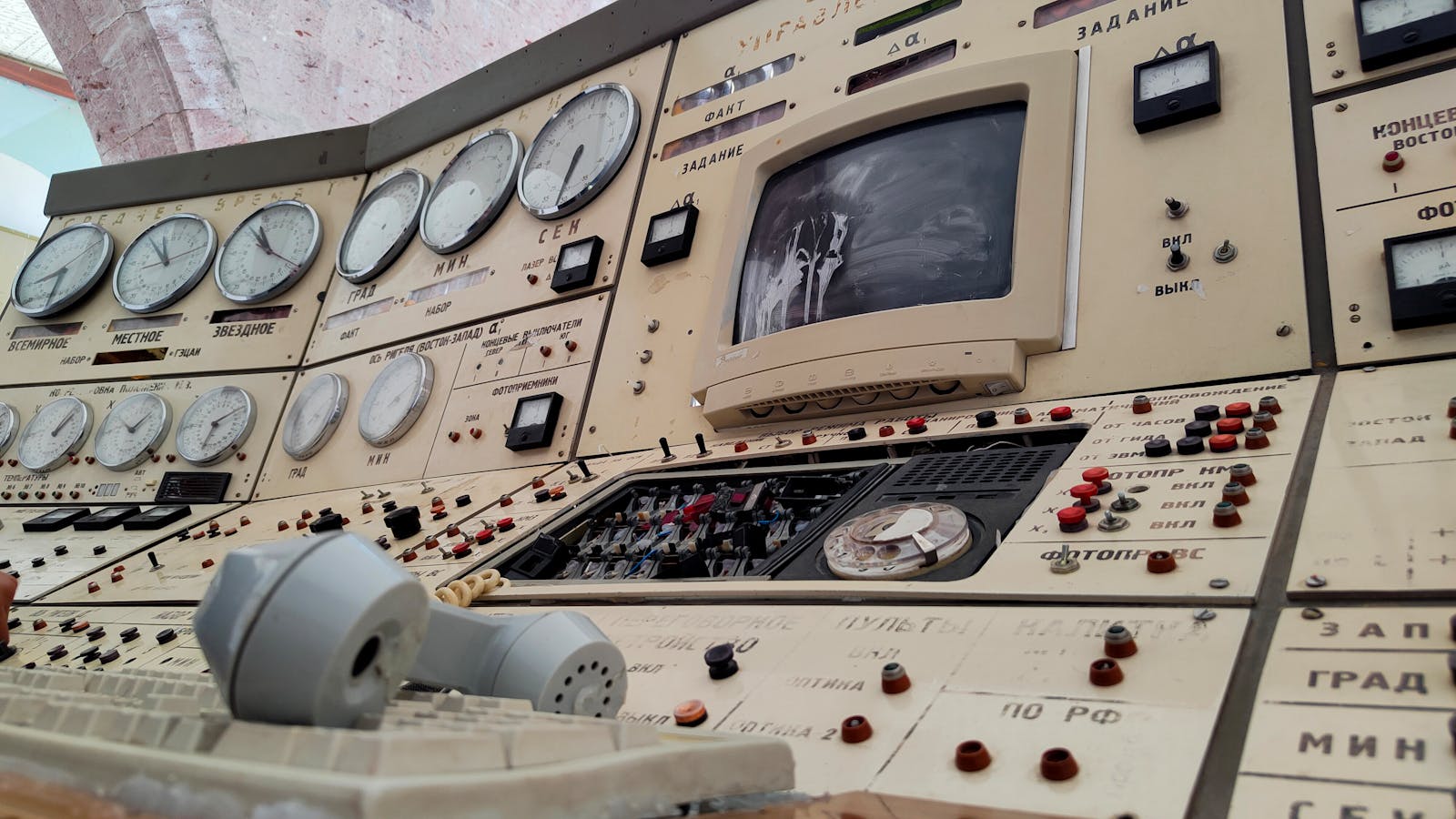

The promise of autonomous vehicles has always been sold on a clean binary: human drivers are flawed, machines are not. Remove the human, remove the error. But emerging reports suggest something far stranger. The humans were never removed. They were just relocated, made invisible, and in some cases, sent overseas.

Industry reports suggest that some autonomous vehicle companies use remote agents based outside the United States to assist with vehicle operations. In some cases, workers may lack U.S. driver’s licenses but can send prompts to vehicles under certain conditions. The distinction between “controlling” a car and “prompting” a car to move raises questions about what autonomous truly means.

Some companies reportedly allow remote operators to directly control vehicles at low speeds in specific situations, positioning this capability as an emergency function and safety net. However, the frequency of such interventions often remains undisclosed.

This is where the story fractures into something more uncomfortable than a regulatory fight. The refusal to release intervention rates isn’t a technical limitation. It’s a branding decision. Every number that quantifies human involvement chips away at the mythology of full autonomy. A robotaxi that needs a human operator once every hundred miles tells a different story than one that needs help once every ten thousand. And no company wants its number compared to a competitor’s.

The lack of a shared definition of “intervention” makes comparison between companies nearly impossible, which may be the point.

Consider what the word “autonomous” now means in practice. A vehicle classified as autonomous can have a human watching its camera feeds, interpreting traffic scenarios, and issuing movement commands. The human is not in the car. The human may not be in the country. The human may not hold a license to drive on public roads. But the vehicle is autonomous.

This represents a new kind of labor arbitrage. Autonomous vehicle companies route their hardest, most consequential decision-making moments to workers who may cost a fraction of what a U.S.-based safety driver would. The vehicle is marketed as needing no driver. The company’s headcount reflects engineers and executives. The people actually monitoring the cars exist in a category that has no clear regulatory home.

U.S. regulators are struggling to keep pace with autonomous vehicle deployment. Cities like Austin, San Francisco, and Phoenix have become testing grounds where millions of residents interact daily with vehicles they’re told are safe. The public can’t fully evaluate safety because comprehensive data doesn’t exist in any accessible form.

The challenge with remote-operated autonomous vehicles isn’t just the technology. It’s the assumption baked into the regulatory framework that a vehicle is either human-operated or machine-operated. Traffic laws were built around the idea that a person behind the wheel is responsible. A car where the person is thousands of miles away, watching on a screen, exists in a regulatory gray area.

So what binding rules govern these remote operators? The answer, for now, is almost none. Federal guidelines for autonomous vehicles remain largely voluntary. The National Highway Traffic Safety Administration has issued frameworks and opened investigations, but binding rules about remote operator qualifications, intervention reporting, and liability are still in development.

What makes this story a technology story rather than just a policy story is the way it exposes how “AI” functions as a branding layer. The term artificial intelligence implies a self-sufficient system. Autonomous implies the same. But the reality is a patchwork: machine learning handles routine driving, pre-mapped routes handle navigation, and when neither works, a human picks up the slack. The AI is real. The autonomy is partial. The marketing conflates the two.

This conflation has consequences beyond the roads. It shapes how people think about the labor behind AI products more broadly. Every chatbot has human trainers. Every recommendation algorithm has human moderators. Many autonomous vehicles have human operators. The pattern is consistent: technology companies build products that appear to run themselves, while the actual running is done by workers who are invisible by design.

Concerns are growing about vehicles whose safety records are proprietary secrets, and about remote operators, potentially many of them overseas, making split-second decisions about American traffic situations they may never have encountered in person.

The numbers the robotaxi companies refuse to release are not just metrics. They are the answer to a question that determines whether the entire premise of the industry is honest. If remote operators intervene rarely, the technology works and the companies have nothing to hide. If operators intervene frequently, the technology doesn’t work as advertised and the companies have everything to hide. The refusal to answer is, in a functional sense, the answer.

Transparency in autonomous vehicles is often framed as a consumer protection issue. It is that. But it is also something simpler and harder to regulate: a question about what we mean when we say a machine can do something on its own. A calculator is autonomous. A thermostat is autonomous. A car that needs a person to tell it how to handle complex traffic situations is something else entirely.

The gap between what these vehicles are and what they are sold as is not shrinking. Deployment is accelerating. More cities are approving robotaxi operations. More residents are sharing roads with vehicles whose safety records are proprietary secrets. Remote operators are making split-second decisions about traffic from time zones that don’t match the streets they’re monitoring.

The technology will improve. It always does. But the question was never whether autonomous vehicles would eventually work. The question is what happens in the years before they do, when the gap between capability and marketing is filled not by better algorithms but by workers watching camera feeds in locations far from the streets they’re monitoring.

“You can’t build public trust on classified data,” safety experts note. “Eventually someone gets hurt, and the first question everyone asks is: who was watching?”

That question has an answer. Someone was watching. They were watching from a screen, possibly thousands of miles away, possibly without extensive experience with local traffic patterns. And when incidents occur, companies frequently refuse to say how often operators have to step in.

The autonomous future is not arriving as promised. It is arriving with human hands on remote controls, human eyes on monitors, and corporate refusals where public data should be. The car has no driver. But it is not driving itself.