The Direct Message

Tension: Silicon Valley predicts an AI jobs apocalypse within five years, but the single economic variable that determines whether AI creates or destroys jobs in any given industry has never been systematically measured.

Noise: AI “exposure” scores give workers the illusion of precision while measuring the wrong thing entirely. The same exposure level leads to job growth in one industry and mass layoffs in another, depending on a data point nobody collects.

Direct Message: The anxiety workers feel about AI is built on half the evidence. The missing half — price elasticity data that would reveal whether cheaper services create demand or just eliminate positions — is concrete and collectible. It simply hasn’t been anyone’s priority.

The gap between the alarm and the evidence is not a small one. It is a canyon.

Here is what’s actually known: the US government maintains a massive catalogue of thousands of individual job tasks, first launched in 1998 and updated regularly. Researchers can look at those tasks and estimate which ones AI could theoretically perform. That produces an “exposure” score. And research suggests that such scores, by themselves, tell us almost nothing about actual job displacement. “Exposure alone is a completely meaningless tool for predicting displacement,” according to University of Chicago economist Alex Imas in MIT Technology Review.

Meaningless. The word carries weight when it comes from someone who studies labor economics for a living. The problem isn’t that AI can’t perform certain tasks. The problem is that knowing this fact does not tell you whether workers will have more opportunities in three years or fewer.

To understand why, consider different scenarios in professional services. Some workers using AI tools since late 2024 have seen their output roughly triple. They draft documents in a third of the time. They process information at a speed that would have seemed absurd five years ago. Their jobs are highly “exposed” to AI by any standard metric. But some report being busier than ever, taking on clients they would have previously turned away. Small firms that couldn’t afford dedicated help are now hiring at lower per-project rates, and the volume more than compensates. High exposure. Zero displacement.

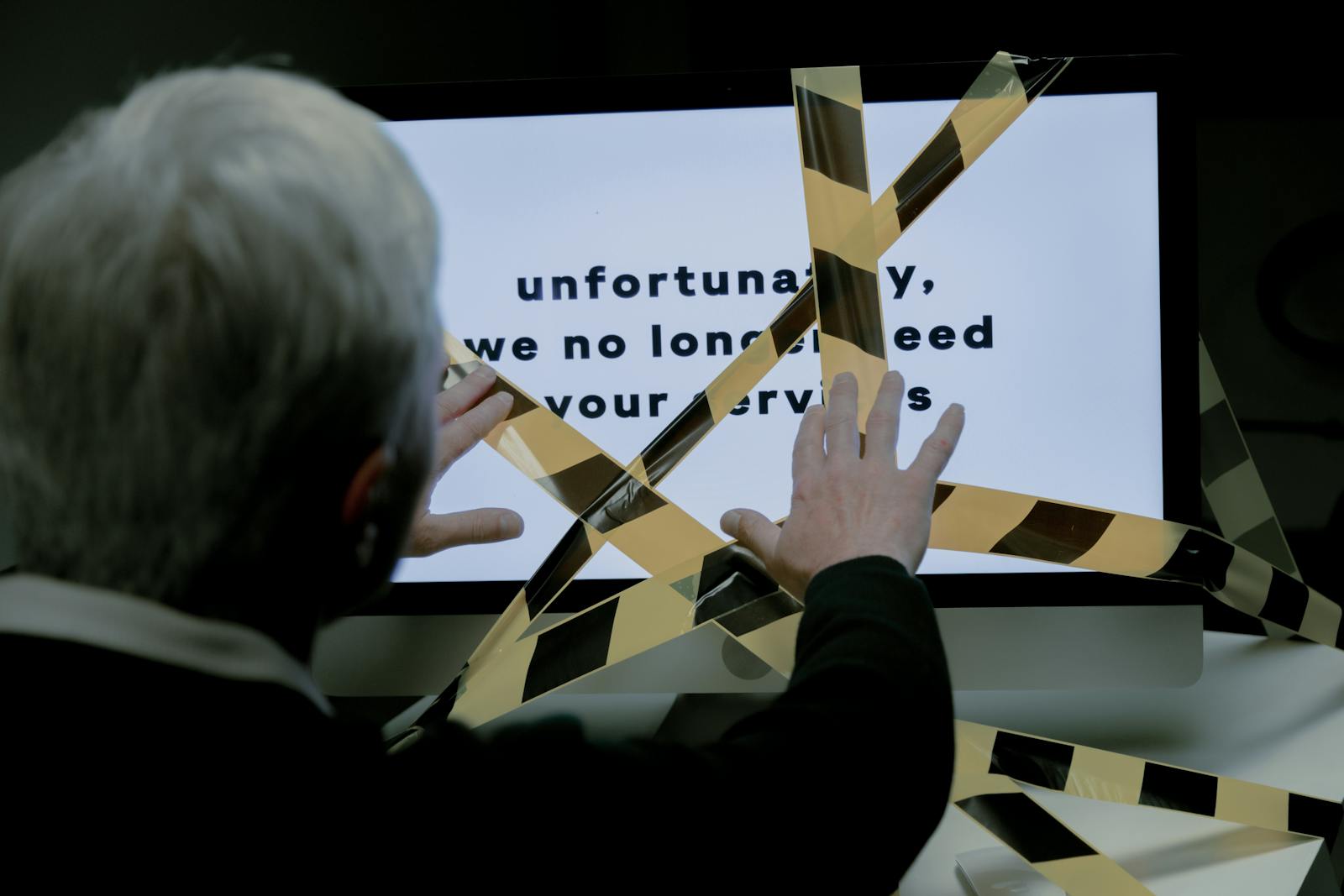

Contrast this with other sectors where companies adopted AI drafting tools in early 2025. Within months, some documentation teams shrank significantly. Those who survived the cuts did so on tenure and institutional knowledge rather than skill. The younger employees, the ones who had spent years making themselves smaller so someone else’s ego could fit in the room, were often the first ones gone. Same exposure score, more or less. Radically different outcome.

The variable that separates these stories has a name, though it sounds like something from a microeconomics textbook because it is: price elasticity of demand. When AI makes a service cheaper or faster to produce, what happens to demand for that service? If demand surges because the lower price attracts new buyers, jobs can actually increase. If demand barely moves because the market was already saturated, the same productivity gains just eliminate positions.

For legal research, cheaper service opened a floodgate of small firms and solo practitioners who’d been priced out. Demand expanded. For technical writing at a company with a fixed number of products, cheaper documentation didn’t create more products to document. Demand held flat. The productivity gains went straight to the bottom line, and the silence after the layoff announcement communicated everything managers didn’t say out loud.
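The arithmetic behind these two outcomes can be sketched in a few lines of Python. This is a toy model, not anything taken from the research cited here. It assumes demand has a constant price elasticity, and that cost savings are passed on to buyers as lower prices (the `pass_through` parameter). The function name and the elasticity values of 1.5 and 0.2 are hypothetical, chosen only to illustrate an elastic and an inelastic market. The tripled output comes from the scenario described earlier.

```python
def employment_multiplier(productivity_gain: float,
                          elasticity: float,
                          pass_through: float = 1.0) -> float:
    """Ratio of workers needed after an AI productivity gain to workers needed before.

    Toy model under stated assumptions:
    - each worker produces `productivity_gain` times as much as before;
    - price falls to productivity_gain ** (-pass_through) of its old level
      (pass_through = 1.0 means all cost savings reach buyers);
    - demand has constant price elasticity `elasticity`, given as a positive number.
    """
    if productivity_gain <= 0:
        raise ValueError("productivity_gain must be positive")
    # New price relative to old price.
    price_ratio = productivity_gain ** (-pass_through)
    # Constant-elasticity demand: quantity scales as price ** (-elasticity).
    quantity_ratio = price_ratio ** (-elasticity)
    # Workers needed = quantity demanded / output per worker.
    return quantity_ratio / productivity_gain


# Hypothetical elasticities, for illustration only.
elastic_market = employment_multiplier(3.0, elasticity=1.5)    # roughly 1.73
inelastic_market = employment_multiplier(3.0, elasticity=0.2)  # roughly 0.42
print(f"elastic demand:   {elastic_market:.2f}x the original headcount")
print(f"inelastic demand: {inelastic_market:.2f}x the original headcount")
```

With full pass-through, the multiplier reduces to the productivity gain raised to the power of elasticity minus one. Headcount grows when elasticity is above 1, shrinks when it is below 1, and stays flat at exactly 1. The same tripling of output can therefore add jobs in one market and cut them by more than half in another, which is why the elasticity figure matters more than the exposure score.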

This distinction is not theoretical. It is the single most important variable in determining whether AI creates jobs or destroys them in any given industry. And the data to measure it barely exists.

Economists have described the situation with rare bluntness. “We need, like, a Manhattan Project to collect this,” one researcher said, referring to price elasticity data across professional services and industries. Research institutions partner with supermarkets to get price scanner data for items like cereal and milk. For grocery staples, the relationship between price drops and demand shifts is well documented. For accounting services, marketing strategy, architectural drafting, software testing, translation, and thousands of other professional activities? The data simply isn’t there.

The absence creates a vacuum. And vacuums get filled by whoever speaks loudest.

Right now, the loudest voices belong to AI company executives making extraordinary claims about their own products. Industry leaders have made sweeping predictions about AI capabilities in coming years. Researchers at AI companies have warned of a potential recession and a “breakdown of the early-career ladder.” These projections circulate through social media with the force of prophecy, picked up by platforms that manufacture urgency and sell it back as news. And when workers see exposure figures on their phones at 11 PM, they don’t have the context to know what those figures mean. Nobody gave them the other half of the equation.

Economists are slowly coming around to the idea that AI poses genuine risks to employment. That shift matters. For years, the professional consensus dismissed automation fears by pointing to historical patterns: new technology always created more jobs than it destroyed, eventually. That optimism is fraying. But even as economists take the threat more seriously, groups like the Yale Budget Lab have cautioned that the current state of evidence remains thin. The models being used to predict workforce disruption are operating on incomplete inputs. They are, in a meaningful sense, guessing.

Consider what this means for workers learning that their agencies will be restructuring around AI-assisted workflows. Some are offered choices: retrain into new roles with pay cuts, or take severance packages. They decide partly because of student loans and partly because they don’t know what else to do. They don’t choose based on data about whether demand for their services will grow or shrink as AI makes those services cheaper. No one can give them that data. It doesn’t exist in any usable form.

These decisions are made in a fog, and workers know it. The fog is the problem.

The asymmetry of information here is stark. AI companies collect enormous amounts of behavioral data. Anthropic analyzed millions of conversations with its Claude chatbot to identify which tasks people are using AI to complete. That data tells companies which job functions are vulnerable. But it doesn’t tell workers or policymakers what happens next. The companies building the tools that reshape labor markets have granular, real-time data on how their products are being used. The people whose careers depend on understanding these shifts have almost nothing.

This is not a conspiracy. It is a structural failure of measurement. The federal government tracks unemployment claims, job openings, and wage data. It does not systematically track how price changes in professional services affect demand across sectors. The instruments of economic observation were built for a different era, one where the primary concern was factory automation and the relevant data points were manufacturing output and import competition. The current wave of AI doesn’t automate factories. It automates cognitive tasks, creative tasks, relational tasks. And the economic infrastructure for understanding what that means simply hasn’t caught up.

Some states have begun to pause data center construction in a reflexive attempt to slow the AI transition. Whether that’s wisdom or futility depends entirely on the data we don’t have. If AI-driven productivity gains in, say, healthcare administration would unlock massive unmet demand for medical services in underserved areas, then slowing the rollout costs lives. If those same gains would merely allow hospital systems to cut administrative staff while maintaining the same patient load, the calculus reverses. Without elasticity data, both arguments are equally plausible and equally unsupported.

The tech industry’s favorite experts haven’t filled this gap. Researchers analyzing AI’s workforce impact have been careful to caveat their findings, and none of them claim to have reliable long-term predictions. But caveats don’t travel well. The headline version of their work, stripped of uncertainty and wrapped in apocalyptic framing, is what reaches workers. The nuance dies in transmission. When the door opens, the hard questions don’t get asked.

What remains is a population of workers making career decisions, accepting retraining programs, negotiating severance packages, and setting boundaries that feel like betrayals of their former selves, all based on exposure scores that measure the wrong thing. The scores tell you which jobs AI can touch. They don’t tell you whether that touch will be a handshake or a chokehold. The difference depends on demand, and demand depends on price, and price elasticity data for professional services is functionally nonexistent across most of the economy.

Workers making one of the most consequential calculations of their working lives need to know whether cheaper services in their field create more buyers or just fewer positions. And that number, the one that would actually inform their decisions, is a number nobody has bothered to collect.

The anxiety millions of workers feel about AI is real. The predictions fueling that anxiety are built on half the evidence. And the missing half is not obscure or theoretical. It is concrete, measurable, and collectible. It has just never been a priority.

That’s the gap no algorithm can close. Not a gap in technology. A gap in knowing what questions to ask about it, and caring enough about the answers to fund the work. The data that would turn guessing into planning, that would let workers make informed choices about their own futures, sits uncollected. Waiting. While the people who need it most scroll their phones at night, reading percentages that illuminate nothing.